YouTube and the Trust Deficit

2026-03-26 | 2026-05-01

Estimated Reading Time: 29 minutes

What YouTube once was

Once upon a time, producing a YouTube (YT) video was a laudable, time-consuming, and painstaking exercise. Actual human beings, with real names, sat or stood before a video camera and said something that others would want to hear, whether as news, or knowledge, or opinion, or entertainment. Their faces and voices carried authority and authenticity. They could be trusted.

And making such videos usually demanded several takes to correct for errors, ensure continuity, etc. In fact, making a YT video was daunting, not only because of the actual recording, but also because of the time-consuming post-processing. More often than not, it was a team effort.

Trust and YouTube

Nowadays, however, YT is awash with slick videos that offer seemingly authoritative advice on health, wealth, current affairs, and many other aspects of life. But if you were to look behind the curtain, all you would see is smoke and mirrors—not human experts. There is an uncanny resemblance to the revelatory scene in the movie, The Wizard of Oz.

This is especially annoying and alarming, and is typical of the devaluation of truth that we are currently experiencing. And I think that AI, in collusion with human frailty and fallibility, has facilitated this trust deficit.

AI and the respect for ideas and persons

The advent of AI has upset the apple cart of creativity. It is now possible, without much effort or expense, to simulate a living or dead person with a realistic AI-generated avatar that looks and sounds like that person. One person, or a very small team, can generate a spiffy-looking video in a short time.

The owner of a YT channel can now propound whatever he or she wants, using famous faces or voices to do so. This craven misuse of others’ personae has become normalized and can be, and is being, used to misinform, mislead, misrepresent, and of course, monetize.1 Lies can now roam the human mindspace with the same carefree impunity as the truth. Telling half-truths and lies has become normative and profitable.

To my knowledge, no AI company has bought the copyright to the complete, gigantic corpus of data on which the magical AI models have been trained, although some have licensed subsets of that data. The uncomfortable truth is that AI has been built on a model where ideas have been used without the explicit permission of their originators, and without compensation to them in the form of copyright royalties. Compare this with patents, and the stark divide will be obvious.

Since AI has itself dealt slyly with the intellectual property rights of others, what it spawns has facilitated the same slippery logic of usage without permission or compensation.

AI has thus become an instrument of deception. The fallible human being has harnessed the disproportionate power of AI to pull off stunts of deceit that would have been impossible a decade ago. Content creators can take cover behind the shield of anonymity, and worse still, masquerade as someone else, in the works they present to the world.

Monetization

Why would anyone go to all that trouble to make a video on YT available for free if not for some pecuniary gain from some source? And that gain, coming from advertisers through YT, is called monetization. The more views your video gains, the greater the reward. In the past, no one would have grudged a well-subscribed YT channel making a handsome sum every month, because that money would have been earned through honest and strenuous elbow grease.

There are several categories of AI-assisted YT content that are particularly repugnant to me. I will deal with each of them in turn below.

Terminology

Clickbait is a recent coinage of the web era and a sign of our times. It usually takes the form of a thumbnail image that arrests a viewer’s attention, often accompanied by a provocative title or link containing words like “Shocking” or “Latest”.

The practice itself is nothing new. Advertisers have been using the same modus operandi to capture customers for more than a century now. The takeaway from advertising is caveat emptor or “let the buyer beware”. In the marketplace of ideas, you have to be your own guarantor.

Educational videos

Richard P Feynman was a much respected, admired, and beloved American physicist with an impish sense of humour. His approach to Physics was profoundly original. His legendary Feynman Lectures on Physics are available for free online. They are valued and studied by interested students to this very day. Almost all of Feynman’s lectures were tape-recorded live when he first delivered them at CalTech.

Thanks to AI, content creators have now made available “video lectures” from Feynman on subjects ranging from how to learn, through how to integrate mathematically, to explanations of physics, and questions about aliens. They usually feature black-and-white videos of Feynman at a blackboard explaining something. But were these movies actually taken while Feynman was still alive?2

The wonder is that the long-dead Feynman has been resurrected and made to speak words that might or might not be factually correct or historically attributable to him. An image of Feynman is lip-synced with the words spoken in the video. Most convincingly, the voice sounds like Feynman’s when he was alive. This abuse of the personae of deceased persons is repugnant to me, but possible today with AI. It raises many ethical and moral issues.

Sticky questions

Are those words what Feynman actually spoke? Or are they the words of the content-creator, claiming that those are words that Feynman would have said?3 What if there are inaccuracies? Worse still, what if Feynman never talked on the subject of the video while he was still alive? If this is misattribution, is it fair to Feynman or his estate? Can any estate meaningfully challenge false depictions in courts of law, given the number of YT videos being uploaded daily?

The opacity of YT

Publications have strict guidelines about citations and attributions. Many a PhD has been revoked because of missed citations or downright plagiarism. But nowadays, the author of a YT channel may hide with impunity behind the opacity provided by YT and peddle opinion as fact. There is no quality assurance mechanism to determine whether what is put out as fact is indeed true.

AI-generated

Some websites do the viewer the favour of telling her or him that some of the content has been generated or enhanced by AI. But what exactly does that mean? Is the video fictitious, or factual, or worse, a mix of both? Is artistic licence used to generate the words in the video? Are any “facts” interpolated or made up? Is the channel owner presenting words that Feynman never said, as something he actually said, simply because the content-creator wants to promote a particular viewpoint or pet peeve?4

The Lure of Lucre

It all boils down to monetization. Never mind if Feynman never said it. Never mind if what is presented is inaccurate. All that matters is that the content-creator makes his or her money. And who is there to police it?

There is no guardrail, like price tags in a supermarket, or maximum retail price (MRP) for a product, to protect the innocent consumer from exorbitant pricing. YT cannot police all the videos on its site for factual accuracy. Nor can the estate of the late Feynman police the creative output of YT content-creators. So, where does the buck stop? Who is there to protect the unwary student? Has AI enriched education while simultaneously poisoning the lake of Truth?

“Feynman” Websites

I will end this part of the blog with comments on some example websites.5

For whatever reason, this channel does not exist now, but did when I bookmarked it earlier in the year. At the time of my last visit, it had several videos, each with Feynman’s face on the thumbnail. It claimed that it was using Feynman’s methods to explain subjects from Physics to aliens. The content-creator’s name was not revealed. Anyway, since the site is now offline, I have no other comments to make.

I do not know if this channel is the successor to the previous one. Its description is shown in the screenshot below.

The aims of the channel are nobly stated in Figure 1, but presuppose that the content-creator is the alter ego of the featured physicists, who knows how they will present the facts. Can anyone really guess how someone else will speak or teach? Can one assume the persona of another and glibly claim to teach like him or her? Is the unnamed content-creator claiming that he or she is implicitly channelling Richard Feynman?6 Is that at all possible?

Most importantly, although the images of the physicists Leonard Susskind and Richard Feynman appear on the thumbnails at the channel website, the author of the channel is unknown. I caution against such “productions” where the author remains in the shadows. It immediately raises the question “Why?”.

We know that the names of Susskind and Feynman are used because of their eminence, very possibly as clickbait. But even if the author is not as eminent, why the cloak of anonymity? The author should be proud to own up as someone who wishes to help students. And perhaps also make a statement that what is presented is the interpretation of the author on how Feynman might have explained the subject.

A channel with an unidentified author should always be approached with caution, unless it is a known corporate news channel or other institutional outlet. Unidentified authors are especially invidious when famous names are harnessed for clicks while the author’s credentials and relationship to those names (e.g., student or research assistant) are not revealed.

When I first came upon this channel—because its name had the word archives—I rejoiced thinking that at last I had found a site that was the authorized site of Richard Feynman, perhaps run by his estate. But alas, that was not the case. The author is anonymous. However well-meaning, why should the author remain anonymous if he or she has nothing to hide and everything to celebrate?

The description of the channel in Figure 2 claims that the viewer will “[understand] the world as Feynman saw it”. I doubt that even Feynman would have made such a preposterous claim!

We each have our individual modes of viewing and understanding the world around us. Even a well-known equation might remain dormant until an Aha! moment reveals its hidden glow. This is an act of personal cognition. It might be facilitated from without, but the illumination occurs from within.

I would approach such a site and its contents with abundant caution. How do we know what is true, and what is conjecture on this website? The best guides to Feynman’s thinking are primary documents like his Lectures and his own books, not the interpretation of an unknown author, however well-meaning he or she may be.7

Health Videos

Because human health and longevity are of interest to everyone, YT is bursting at the seams with videos on the subject. Swarms of content-creators have rushed to participate in this modern gold rush to monetize health-improvement videos.

The only problem with this surfeit of advice is that its truth is largely suspect. All the drawbacks of AI-generated educational videos apply to health videos as well. And while mimicking Feynman might at worst mislead a physics student, improper or wrong medical advice could cause harm or even kill.

It has become commonplace for non-existent “doctors” to make health recommendations on YT, especially if supplements or other products are also hawked during the video. The images of these “doctors” are quite realistic, and their lip movements are effortlessly synchronized to their speech by AI.

The viewing public, innocent of the subterfuge being perpetrated, might take on board such advice without exercising any customary scepticism.

It is vital for the health-conscious YT aficionado to check the information being dispensed against multiple, trusted sources before swallowing everything hook, line, and sinker. The next example is telling.

An Analog Fact-Fiction Mixture

Let me showcase a typical video in the senior-citizen health genre. Such a video is an accomplished mixture of fact and fiction. It exaggerates benefits, uses sensational framing to hook viewers, promotes lifestyle changes with a mix of partial truths and oversimplifications, promotes unverified claims to drive engagement, and pushes sales of a supplement, or guide-book, or related products.

If everything were false, the video could be easily debunked. A digital lie is usually absurd, and effortlessly recognized as such; it can be promptly countered or dismissed.

But an analog lie is based on half-truths. It is harder to recognize and refute. It hides at the subtle interface between truth and falsehood.

It becomes extremely difficult for the lay YT viewer to separate the wheat of established truth from the chaff of exaggerated claims.

Short-shelf life videos?

Many of the “newly sprouted” health videos are of very recent origin. They are the children of AI synthesis and mostly date from 2025 or 2026. The more successful ones attract a very large viewership in a relatively short time. They might also fade away into oblivion once sufficient profits have been made, or once enough complaints about them trigger action by YT.8

If they have been pulled by the time this blog is read, the reader might infer that I am alluding to non-existent YT videos. Hence, this blog has more images than usual. They are proof that the videos and the underlying practices were real, at least at the time of writing.

A video on diabetes

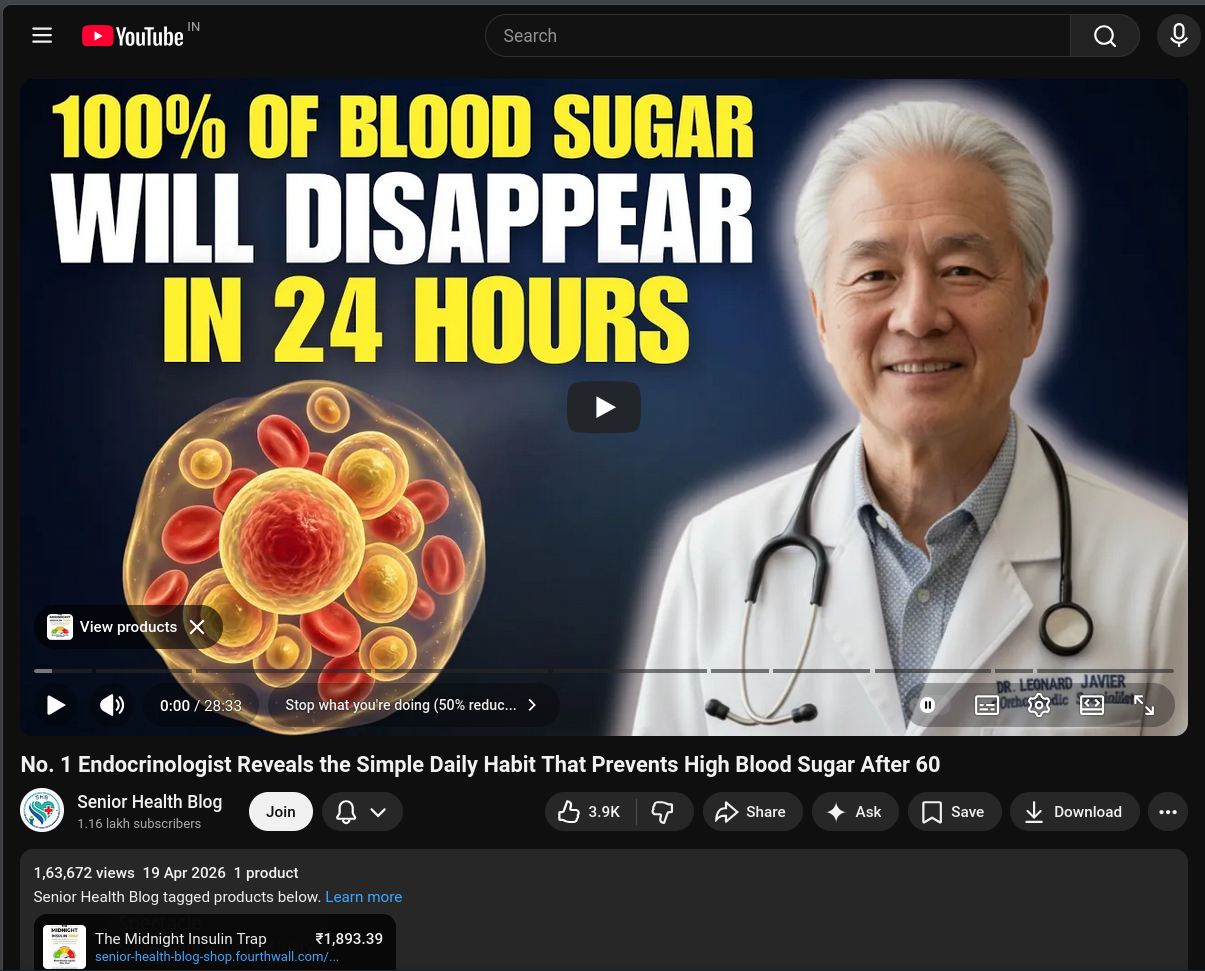

Let me now showcase a typical flawed video in the health genre [1]. Its thumbnail image is shown below in Figure 3.

Note the two textual clickbaits:

The text emblazoned across the top of the thumbnail says “100% of Blood Sugar Will Disappear in 24 Hours.” Would the person not be dead if all his or her blood sugar disappeared? Obviously, the “author” Senior Health Blog lacks basic biological knowledge, let alone medical knowledge. But he or she is an expert on clickbaits: “100%” draws viewers.

The title below the thumbnail states “No. 1 Endocrinologist Reveals the Simple Daily Habit That Prevents High Blood Sugar After 60”. The selling point is that the venerable doctor pictured in the thumbnail is the “No. 1 Endocrinologist.” The channel is silent on “where and when” the venerable doctor was the “No. 1 Endocrinologist”: perhaps these are moot details beneath consideration! But who is this expert? More on that later.

Both clickbaits reveal the stark ignorance of Senior Health Blog on health matters. This content-creator remains anonymous, save for his or her nom de plume. And this is what I object to the most. The person9 milking these videos for the money pails they yield remains in the shadows, behind a veil of anonymity and a wall of unaccountability.

Who is Dr Leonard Javier?

Take a look at Figure 3. The venerable grey-haired doctor is shown in his clinical white coat with his name embroidered in blue.

For weeks, I had been lapping up the advice given by Senior Health Blog, until I attempted to check on the identity of the “No. 1 Endocrinologist” Dr Leonard Javier. And the results were quite deflating. A web-wide search did not uncover a single endocrinologist named Dr Leonard Javier.

There is indeed a Dr Leonard Javier MD at the University of the Philippines Manila, and he is affiliated with the Department of Family and Community Medicine. So, he is not the person alluded to in the YT video.

Dr Leonard Javier, Endocrinologist at YT Senior Health Blog, does not exist.

He is a phantasm, a figment of the imagination, brazenly portrayed as an eminent medical doctor. His onscreen persona is AI-generated as is his voice. Only the Almighty knows who wrote the script, and perhaps even that was relegated to an AI agent.

Does the Senior Health Blog channel even faintly suggest that Dr Javier is AI-synthesized? Let us look at the channel description in Figure 4.

So, AI has been harnessed quite ably to deceive the viewing public into thinking they are getting solid, expert medical advice for free, when what is being dished out is suspect material by a non-existent medical doctor.

The only concession to truth is the legal disclaimer:

Legal:

Content is for educational purposes only. Always consult your healthcare provider before making changes to diet or exercise routines.

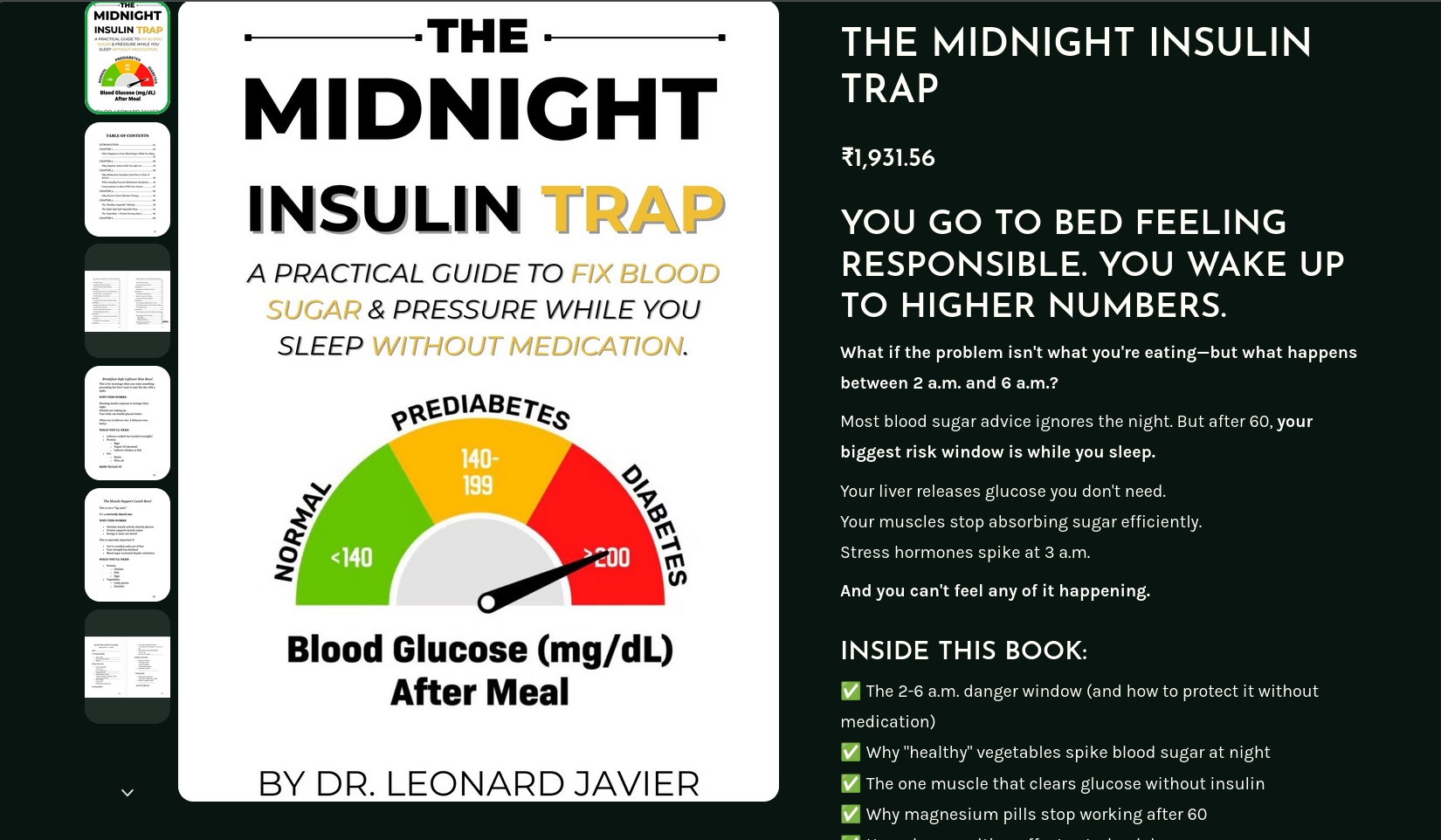

The sale

A YT health channel that does not hawk a product would be an oxymoron. True to form, this Senior Health Blog production comes with its own book recommendation, written by none other than the eminent Dr Leonard Javier! Figure 5 displays his authorship in all its glory! And at ₹1,931.56, the book is not cheap by Indian standards, either. The channel-creator is laughing all the way to the bank.

When I read the author’s name on the book, I felt like an actor in the movie The Matrix: caught up in an AI-conjured reality where norms may be warped.

The citations

An honest critique would not leave the references cited on the YT page unexamined. This video is to be commended because it provides citations, which are often omitted by many other health videos. But this veneer of respectability is flaky.

The six references are actually seven in number. The citations are riddled with errors. Even when they feature the correct title, authors, journal, and link, weak findings are presented as strong results. This is what I call analog lying. How could a layperson take the advice in the video wholeheartedly?

The takeaways

Overall, the video’s recommendations are sensible and very likely will promote better health:

Get sufficient high-quality sleep every night.

A ten-minute walk after dinner is salutary.

Eat resistant starch, which does not raise blood glucose as much as ordinary starch.

The order in which a meal is eaten can affect blood glucose: eat proteins first.

Keep hydrated after waking up.

These habits—better sleep, post-meal activity, consuming resistant starch, eating proteins first, and hydration—are generally sensible and supported by research at least preliminarily.

But the video oversells the ease, speed, and magnitude of the benefits that will accrue, while presenting it as insider knowledge from a top specialist. This dilution of truth with falsehood raises disturbing ethical questions.

Disturbing Questions

The web page for Senior Health Blog makes no declaration that their videos are AI-concocted claptrap produced to earn money. There is no mention that the entire video has been fabricated, like a work of art or fiction, possibly by an industrial-strength “AI content farm”.

Even the research citations suffer from AI hallucinations.10 What is the world coming to? Is this what we have inherited from AI? Or is human greed for easy money also to blame?

Who is culpable here? The channel has a legal disclaimer, shown in Figure 4, which is proper and correct. I have seen such disclaimers even in genuine videos where real-life doctors give information in areas where they are specialists.

But the larger question is philosophical. In a health-related video, with an anonymous, possibly non-human content creator, featuring a fabricated human-like persona, dispensing advice that is machine-generated, who is culpable in a case of fraud? Is there any accountability at any level? Or is the modern YT gold rush subject to its own rules of engagement, beyond the pale of ethics?

The verdict?

So, what do we do with the Dr Leonard Javier videos? Are they all suspect? Yes, they are. Is the information from them unreliable? Perhaps. Should we watch them? Only after knowing their limitations. Do they convey useful information? Possibly, at least through their clickbait titles, which are sure to interest a large population of viewers. The final verdict? The health-conscious YT aficionado must check the information being dispensed against multiple authoritative and trusted sources before accepting and acting on the given advice.

A mitigating example

There is another health advice channel featuring a “Doctor Laura”. But the folks running that channel have stated:

Disclaimer: Dr.Laura is an AI health educator and this channel is for educational purposes only and does not provide medical advice.

Always consult a qualified healthcare professional before making changes to your health, diet, or lifestyle.

One has to be grateful for small mercies in a world dominated by greedy sharks. But this comment alone does not merit my endorsement of this channel. Caveat emptor!

The real McCoy

Thankfully, YT is not devoid of real-life doctors who share their knowledge to promote public awareness and health. Four of them are:

Website: https://drwilliamli.com/

Official YT channel: https://www.youtube.com/c/drwilliamli

Website: https://www.doctorjasonfung.com/

Official YT channel: https://www.youtube.com/@DrJasonFung

Website: https://www.saurabhsethimd.com/

Official YT channel: https://www.youtube.com/@doctorsethi

Website: https://drpalmanickam.com/

Official YT channel: https://www.youtube.com/@DrPal/featured

The links above are the authorized links they use to share their knowledge with the public at large. Note that medical research is rapidly evolving and that the best cutting-edge medical research can, and sometimes does, contradict previous prescriptions.

Current Affairs Videos

Respected commentators on international affairs like Professor John Mearsheimer and Professor Jeffrey Sachs have given interviews that are often featured on YT.

While Professor Sachs has his own official channel, Professor Mearsheimer does not. Therefore, even more caution is required in filtering out false narratives attributed to Professor Mearsheimer.

The instantly recognizable voice and distinct intonation of Sir David Attenborough have been used to snare viewers and give a sheen of respectability to some AI-generated documentaries, which might have no connection with Sir David. This is another distasteful practice on YT.

We are living in an era where thieving happens openly. Truth is mixed with falsehood and sold for profit by entities that hijack eminent names, faces, and voices to do so. AI can make thieves of us all because AI itself is founded on the illegal use of copyrighted material. Alas, one theft has begotten another!

History Videos

My final example YT video is from the history genre. My son and I recently watched it, with AI-generated black-and-white flashback images, interspersed with commentary that sounded historic and authentic. As a story it was utterly riveting. We scarcely noticed the forty-seven minutes ticking away while we were engrossed in it. It was a story about the legendary valour of Gurkhas [2].

Always verify!

After the video ended, our curiosity got the better of us. We wanted to find out more about the courageous Gurkha soldier, Lal Bahadur Thapa, who had been awarded the Victoria Cross (VC).

And that was when the whole fabric of the video drama disintegrated like a fragile cloth exposed for long years to the sun. There was not a shred of truth in the video.

The operation at Monte Cassino in Italy on 13/14 June 1944 was entirely fictitious. The action which won Lal Bahadur Thapa his VC took place at Rass-es-Zouai, Tunisia, on the night of 05/06 April 1943, for an entirely different reason than what the video had led us to believe. So, time, place, and event were wrong.

No Gurkha soldier was awarded the Victoria Cross (VC) specifically for an operation on Monte Cassino itself.11

By skilfully weaving two different narratives from two different campaigns12 into a tension-filled single dramatic war narrative, the AI generated a gripping WW II tale that would attract viewers and earn the channel-owner good money. The AI did its job well.

![Figure 6: A screenshot from the Gurkha video [2].](https://swanlotus.netlify.app/blogs/images/gurkha-video.png)

From Figure 6, it is clear that the channel Britain At Arms, located in the US, had garnered 357,705 views13 of the video since its publication on 06 February 2026.14 Big money was made, and quickly too! I wonder whom to credit for this: the anonymous channel-owner or the artful AI that generated this drama!

Assuming that the whole story was patched together by the AI from its humongous databases, one can surmise that elements of two actual military operations were fused to construct a plausible, riveting narrative of battlefield courage and glory. Why not let AI do the fancy footwork and quietly rake in the dough?

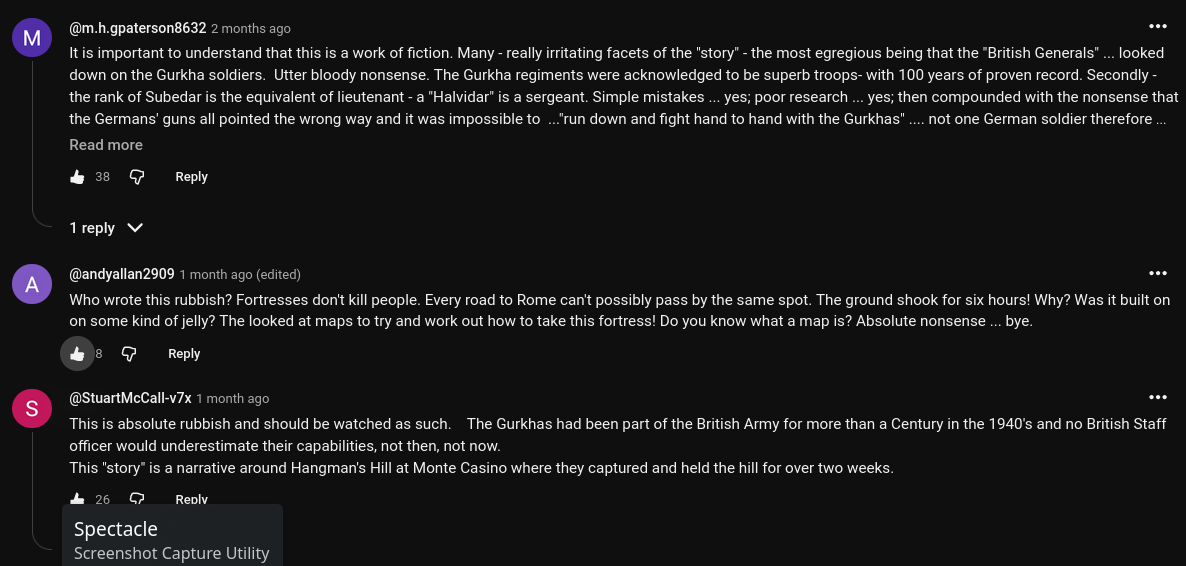

I must confess that just this once, I felt sorry that the depicted drama was made up. To confirm that it was untrue, I took a look at the comments, which often expose fabrications quite mercilessly.

The top three comments at the time I captured them in Figure 7, surely enough, roasted the drama as being a “work of fiction”, or “rubbish”. I rest my case.

Was dramatic licence disclosed?

The channel description for “Britain At Arms” does not indicate liberties with the truth or dramatic licence in the production of its videos. What it says seems to suggest that the recounted tales are little-known but true!

Britain At Arms explores the stories that built Britain’s reputation under pressure. Not just the famous victories—but the close calls, the quiet missions, and the moments of nerve and invention that decided everything.

From cramped war rooms to freezing night patrols, we follow the people who carried the weight—soldiers, sailors, aircrews, and civilians—when there was no room to fail. These are the stories you don’t hear often… but never forget once you do.

Truth is the first casualty of the AI-generated gold rush on YT!

Ideological skewing

Not only commercial greed, but also ideology, might be powering such videos. So, the AI-generated or AI-assisted video might not be simply dispensing half-truths for monetary profit; it might also be propagating an ideology that is skewed toward a particular viewpoint.

Policing is impractical

One might ask, “Why don’t the august professors and medical doctors sue impostor channels with a ‘take down’ demand?”. I ask, “Would you have time enough in 24 hours to police the burgeoning world of YT channels and do your normal work?”.

Whose responsibility is it to police YT to separate the wheat from the chaff? Should YT do it? Should the channel owner do it? Or should a neutral enforcer do it? If no one does it, the viewer perforce has to do it. We live in a strange age where instant information comes as a mixture of fact and fiction, or fact and ideology, or fact and propaganda. Truth itself has been subordinated to generating wealth or peddling influence.

Self-attestation helps

The channel NutritionFacts.org in its description page claims that:

NutritionFacts.org is a science-based nonprofit organization founded by Michael Greger, M.D. FACLM, that provides free updates on the latest in nutrition research via bite-sized videos, blogs, podcasts, and infographics. We are strictly non-commercial, without any sponsors, ads, brand partnerships, or paid subscriptions. More than 2,000 videos on virtually every aspect of healthy eating are available online, and new videos and blogs are uploaded weekly. Our reach is global, with a Spanish-language site, Chinese platforms, and video captions in many languages.

NutritionFacts.org is a labor of love—a tribute to Dr. Greger’s grandmother whose own life was saved by improving her diet.

Videos-of-the-day are released Monday, Wednesday, Friday, and podcasts are released Thursday. Our videos are always ad-free. If you would like to support our work, visit https://nutritionfacts.org/donate/.

A cursory check indicates that the good doctor is a real person. So, the channel may be trusted. But as Ronald Reagan said, “Trust but verify”. Always!

Anatomy of AI-generated videos

Let me summarize the sorry state that YT has come to after AI-generated videos started being uploaded by those wishing to earn easy money whilst remaining unknown and unaccountable themselves. The schema is as follows:

A channel is started by an unnamed content-creator to make money by attracting viewers to watch his or her content.

An eminent person—be it a professor, medical doctor, or economist—is first selected and his or her form and voice are realistically simulated by an AI to produce extremely convincing totally synthetic videos.

The script is authored, not by the real person being simulated, but by an unknown entity that could either be the unnamed channel-creator, or an AI, based on data it has access to.

To increase the credibility of the video, citations are given to published literature. Such citations are often riddled with errors or inconsistencies, politely referred to as AI-hallucinations.

Unless the person whose image and voice are being synthesized has given explicit permission and endorsement for such use, this is theft of identity. Yet it does not seem to have brought about criminal charges or courtroom challenges.

In the absence of explicit endorsement, is it not theft, fraud, plagiarism, intellectual dishonesty, etc., if the unnamed content-creator is rewarded monetarily for these unethical, even criminal activities?

Is there no sense of human decency left?

It is especially immoral when channels use AI-generated versions of departed but respected iconic figures like the Nobel Prize-winning physicist Richard Feynman to say things he might never have said in his whole life. This perversion of ethics will come back to haunt the technological oligopolies running the YT-AI scene at present.

Fake videos 101

In our time-scarce lives, it helps immeasurably to identify fake videos before we spend time on them. Here are some major telltale signs:

Sensational claims in titles coloured yellow or red on black

Arresting thumbnails

Overly long videos that frustratingly repeat what has already been said, so that a two-minute explanation is stretched to twenty minutes.

Recycling of old material as new with little or no value added.

The comments are often a good guide to the authenticity of the channel.

The archetypal fake channel

I list below the characteristics of a typical fake channel so that caveat emptor is helped along with hints:

Channel author or content-creator remains anonymous.

A web search for the name of the “medical doctor” featured in a health video turns up null. There is no one by that name.

If the hand gestures and head nodding are repetitive, it is very likely that an AI persona is delivering the message. Once you note this, be careful and cross-check what you have been told as fact or advice.

An eminent face or voice is used to promote legitimacy for the channel even though those faces or voices have not endorsed the channel or its content.

The entire video is narrated by an AI voice that skips on non-terminal word boundaries. That is, the narration, on occasion, terminates a sentence on the wrong word, leaving you wondering who wrote the script.

The content of the video is not factually accurate, and could be downright false.

The video is between two and ten times longer than necessary to convey the “facts”. This is usually evident in the comments section of the video, where irate viewers will pinpoint the timestamps where the important information is given, and rue the time wasted.

There is no reference to published and available research papers in the case of health videos.

Viewer checklist

Verify the identity of the author

Beware of sensational thumbnails

Cross-check citations

Compare with trusted sources

The Solution?

AI is a double-edged sword. Just as AI generated the trust deficit, AI might also be used to determine the integrity of any channel. But this introduces an additional verification or filtration step into the process. If the viewing public does not mind the inconvenience of verification of authenticity, AI could be harnessed to adjudicate on the authenticity of videos. This is the practice of auctioneers, who establish the provenance of valuable objects before they are put on sale. Can YT provide video pre-filtering to accomplish something similar?

Feedback

Please email me your comments and corrections.

A PDF version of this article is available for download here:

References

1. As we shall see later in this blog.

2. My understanding is that seven movies were made of Feynman lecturing at Cornell. A total of 122 of Feynman’s CalTech lectures were tape-recorded.

3. Does the content-creator claim to be Feynman’s doppelgänger? And if so, on what basis?

4. I am using Feynman as an archetype here. This applies equally to every other “suspect” video on YT where an anonymous author purports to be the voice of someone else, whether living or dead.

5. I have no ill will against any of these and subsequent example websites. I am simply acting as the canary in the coal mine.

6. I do not mean a YT channel of course! 😉

7. I am not alone in calling this out. The charmingly entitled “Feynman slop on YouTube: aaarrrrrgh!” posted on 2026-03-15 takes a kindred view.

8. These videos have been fashioned to “take the money and run”.

9. Or more likely, AI.

10. AI hallucination refers to confident but false, illogical, or fabricated outputs generated by AI models, particularly Large Language Models (LLMs).

11. However, the 1st Battalion of the 9th Gurkha Rifles (1/9GR) notably held the precarious “Hangman’s Hill” position, located about 250 meters from the famous monastery, at Monte Cassino for ten days in an extraordinary act of bravery. This was the closest the video came to the truth.

12. Or perhaps, even more than two!

13. At the time the screenshot was captured.

14. Most such videos have publication dates in 2025 or 2026.